Our Ethical Practices

INTRODUCTION

Ethical practices in EdTech

In 2022 Human Rights Watch investigated 164 government-endorsed EdTech products and concluded that they “put at risk or directly violated children’s privacy and other children’s rights, for purposes unrelated to their education.”

This study highlighted a potential lack of governance in the rapidly expanding use of technology in education, particularly during COVID school lockdowns. It followed concerns raised by a group of US Senators that school technology may be used for surveillance and misused for disciplinary purposes.

Recently, we’ve seen policy makers and regulators respond to these and other concerns about safety, security, the rights of parents and students, and the potential for misuse of technology in education. This is particularly so in the US, where a stream of new laws is being proposed and passed.

Whilst perspectives on proper practices differ markedly, we find that all stakeholders and advocates in these debates share the good intention of protecting and supporting children. As a provider of student safety and wellbeing technology, we find that our products sit in the middle of these dynamics.

Furthermore, because our platform seeks to bring together schools, students and their parent communities, matters such as sharing, security, consent, bias and agency are fundamental.

The education sector, and therefore safety technology providers like us, faces a unique set of circumstances when considering ethics and technology.

Whilst safety tech providers deliver services under contracts with schools, considerations of student safety, reporting and ethics can override these arrangements.

Further, safety tech feature sets frequently sit at the junction of competing stakeholder interests. For example, how do we reconcile a child’s right to agency and privacy with a school’s duty of care and with parental rights or requests for visibility?

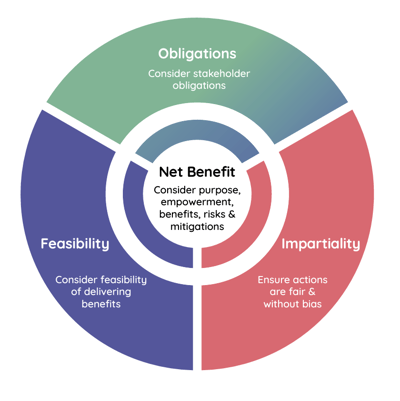

Our Ethical Guidelines

1. Obligations

We must comply with regulatory, statutory and compliance standards and obligations in all the regions where we operate, and support our customers in meeting their own obligations.

2. Impartiality

We do not take a subjective position on the rights and obligations of our stakeholders, and do not impose subjective values, attitudes or beliefs through our technology. We seek to ensure that our approach to product design, community education and advice to our customers supports choice and is evidence-based.

Our systems are designed to avoid or minimise any opportunity for subjectivity or bias. Throughout the design process, we pay attention to possible inherent inequalities or disadvantages to vulnerable communities that system design may cause or exacerbate.

We promote the responsible application of our system outputs and aim to upskill our stakeholders with best-practice suggestions on acting on these outputs, with the ultimate goal of preserving and protecting children’s safety and wellbeing.

3. Feasibility

Our decisions must be grounded in what is possible given technical, resourcing and timeline limitations. We seek to offer only features that we are reasonably confident can fulfil their intended purpose.

4. Net-benefit

Our products and services are designed to provide benefits to stakeholders while minimising potential harm to individuals.

An overall net benefit to society is required. Net benefit is analysed based on the following principles:

4.1 Good and proper purpose. We build products and services with the intent of making a positive contribution to the world. We are mindful of the potential harm from their use and misuse. Our decisions are grounded in achieving the common good. Our chosen means of delivering benefits are not intrinsically harmful or wrong (e.g. a gross and untargeted invasion of privacy).

4.2 Empowering stakeholders. We believe in the capacity of people to make decisions on matters that affect them. We seek to provide all stakeholders with choices, education and expert advice on the possible, appropriate and ethical use of our products.

4.3 Weighing benefits and risks. Net benefit analysis considers the benefits and risks to all stakeholders. Benefits and risks are assessed in terms of their likelihood and the severity of their impact. Available mitigations and the potential for misappropriation of our systems are relevant factors in assessing risk.

Ethical Review Process

Our Ethical Guidelines are governed by our Ethics Working Group, which meets monthly. Applicable initiatives and reviews are conducted through these steps:

1. Define:

Define the ethical concern and the interests of relevant stakeholders.

2. Contextualise:

Contextualise the concern in relation to our system features or designs, as well as any legal, regulatory and compliance standards applicable to us and/or our customers.

3. Analyse:

Analyse the concern by applying our framework through our working group and in consultation with external experts where considered necessary.

4. Outcome:

Agree on our position including any mitigations.

When do we apply the Ethical Review Process?

Our guiding principles should always underpin our decision-making and system development. The circumstances that would instigate an ethical review and the application of this process as outlined are:

• We foresee that stakeholder rights may be in conflict; or

• The requests of one stakeholder may result in risk for another stakeholder; or

• We are reviewing historical design decisions.

For more information

If you have any questions or would like to know more, please do not hesitate to contact us.

To receive our Ethical Practices Framework in PDF format, click here.